OpenAI’s new job transition framework is more honest than most, which is maybe why its blind spot is so frustrating.

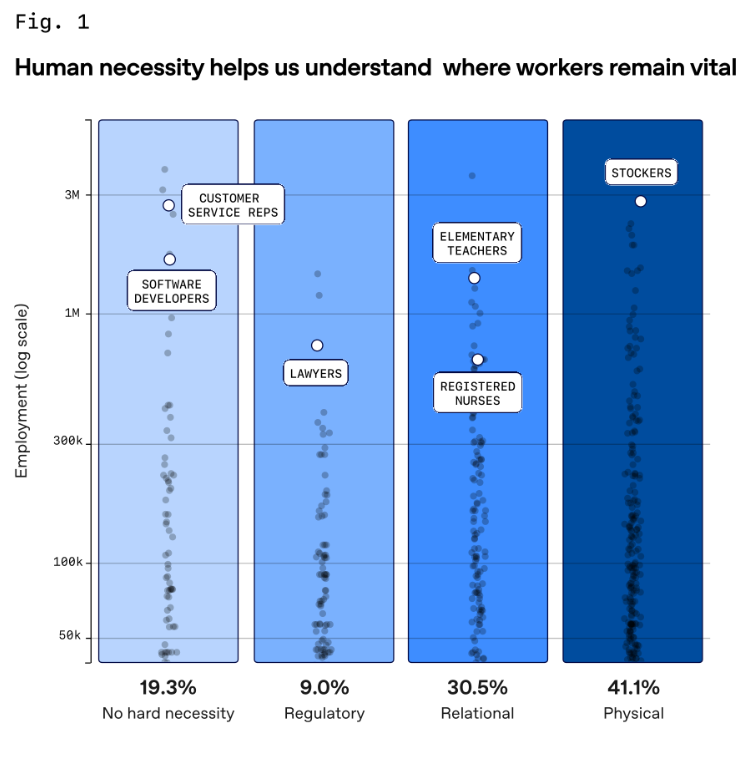

The gates it describes (regulatory, relational, and physical) are not equally durable. When economic pressure gets high enough, gates move. Telehealth exceptions during COVID showed how fast “regulatory necessity” evaporates when incentives shift. Scope-of-practice battles are already playing out in medicine and law, with AI vendors actively lobbying to redefine what actually requires a licensed human. It’s happening now.

The relational category is probably the most misread. The framework protects the job title, not what the job is made of. When AI absorbs documentation, diagnosis support, and lesson planning, what’s left gets rebundled into something new, and that new thing faces its own assessment. “Relational” isn’t some fixed property of a job. It’s just a name for whatever’s left after automation finishes the cognitive parts.

And then there’s the number buried in a footnote: ChatGPT usage runs 3x higher in the most at-risk jobs. That can sound like people sleepwalking into their own replacement. I read it as fear: paralegals who’ve seen what GPT does with contracts aren’t oblivious; they’re scrambling. Some of it is that exposure to risk creates its own urgency in a way that just doesn’t exist for safer roles. And a lot of it probably isn’t voluntary at all: managers mandating adoption, headcount quietly frozen, jobs being restructured around the tool while the org chart stays the same.

The 80% figure tells you where friction lives right now. The 3x number suggests the people inside that friction already know how it ends.

One more piece of context: OpenAI published this at a moment of scrutiny over AI’s labor impact. The quiet exclusion of “human preference” jobs from the headline figure makes the numbers read as more reassuring than the data really warrants.