Legal research. Medical imaging. Financial modeling.

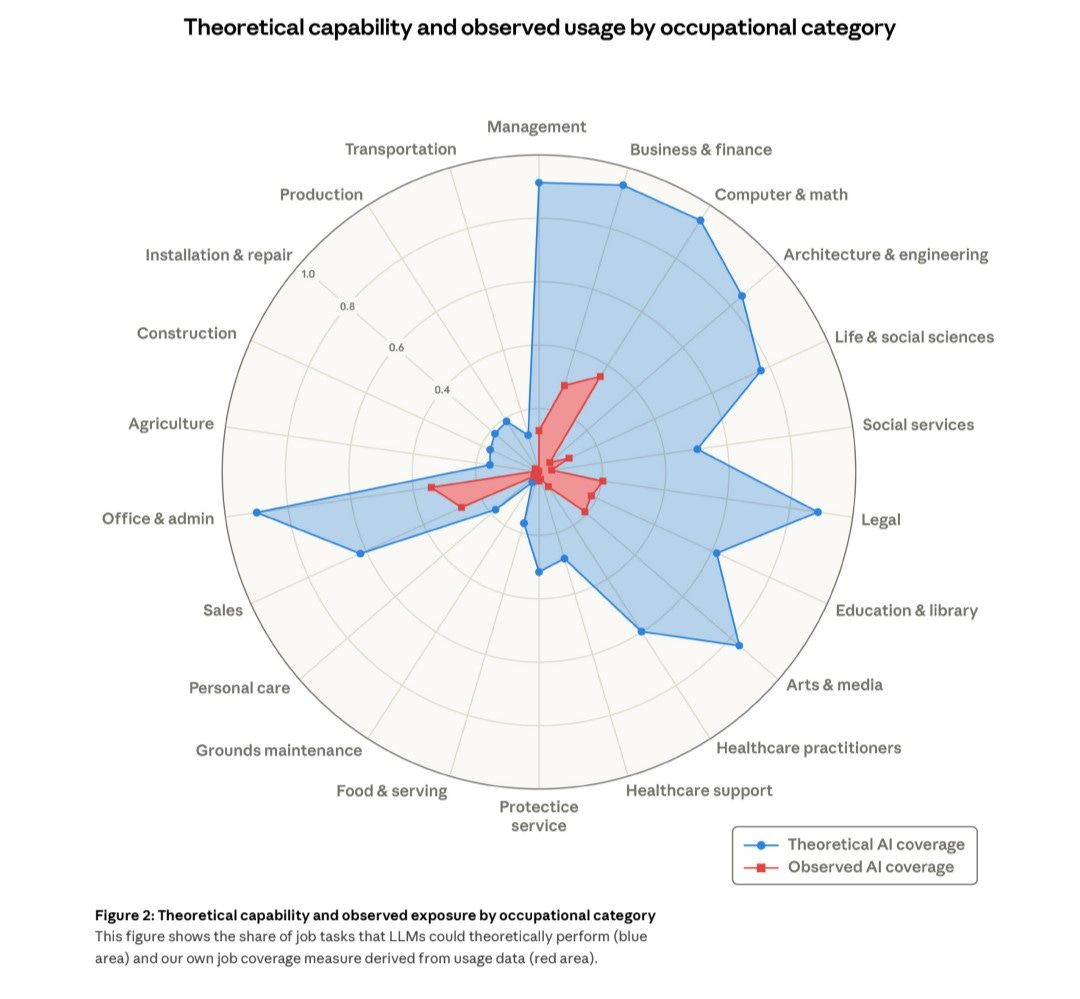

These are precisely the domains where AI benchmarks most impressively, and precisely where adoption lags furthest behind technical capability. According to the widely shared radar chart from Anthropic’s Economic Index research, the professions with the highest shares of AI-automatable tasks show the widest gaps between potential and actual deployment.

The pattern is consistent enough to demand explanation. My working hypothesis is that four friction points account for it:

Verification costs: In routine administrative work, checking AI output takes seconds. In diagnostic medicine or contract analysis, verification requires the same expertise that the tool was meant to augment in the first place. If reviewing AI's work costs as much cognitive labor as doing it yourself, the efficiency case collapses. This may partly explain why office and admin work, despite high theoretical exposure, shows comparatively stronger observed adoption: the verification overhead is low enough that the time savings actually materialize.
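To make that arithmetic concrete, here is a minimal sketch. The function name and every number in it are hypothetical, chosen only to illustrate the argument rather than drawn from any dataset: the time saved on a task is the manual time minus the time spent prompting the tool and verifying its output.

```python
def net_minutes_saved(manual_minutes, ai_minutes, verify_minutes):
    """Minutes saved per task when AI drafts and a human verifies.

    A value at or below zero means the efficiency case has collapsed.
    """
    return manual_minutes - (ai_minutes + verify_minutes)


# Hypothetical figures, for illustration only.
# Routine admin task: checking the output takes about a minute.
admin_task = net_minutes_saved(manual_minutes=10, ai_minutes=1, verify_minutes=1)

# Contract analysis: verification needs nearly the full expert effort.
contract_review = net_minutes_saved(manual_minutes=120, ai_minutes=5, verify_minutes=115)

print(admin_task)       # 8
print(contract_review)  # 0
```

Under these assumed numbers the admin task keeps most of its savings, while the contract review saves nothing, even though the tool itself is fast in both cases.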

Liability exposure: Errors in scheduling software create inconvenience, whereas errors in treatment recommendations create lawsuits. Existing malpractice and fiduciary frameworks assign blame to humans; no equivalent structure governs algorithmic failure. Until liability follows the technology, professionals will rationally refuse (or simply be afraid) to cede judgment.

Workflow integration: AI tools optimize isolated tasks, and most measurement of AI exposure stays at the task level. But professional work bundles tasks together and does not separate them neatly. Drafting a brief is not separable from client consultation, strategic judgment, and court procedure. Slotting AI into one step often destabilizes the others. Management occupations illustrate this well: theoretical exposure is high, but the role is defined by judgment calls and context that sit above what AI, or any technology, can cleanly absorb.

Professional identity and credentialing: Licensing boards, bar associations, and medical credentialing bodies actively constrain which decisions can be delegated and to whom. The credentialing systems that gatekeep entry into high-stakes professions encourage more critical, and hopefully more responsible, adoption. The flip side is that they make certain forms of technology adoption technically non-compliant, even when those forms might be good for productivity. These barriers are structural features of high-stakes work that benchmark enthusiasts consistently underweight.

The most important insight the radar chart offers is not in the blue area but in the distance between blue and red, and in why that distance exists. That distance is a measure of how much professional work sits inside institutional, regulatory, and cognitive structures that task-level benchmarks were never really designed to capture.

Organizations betting on rapid adoption should ask whether they’re solving a technical capability problem or misreading what the data could ever tell them.